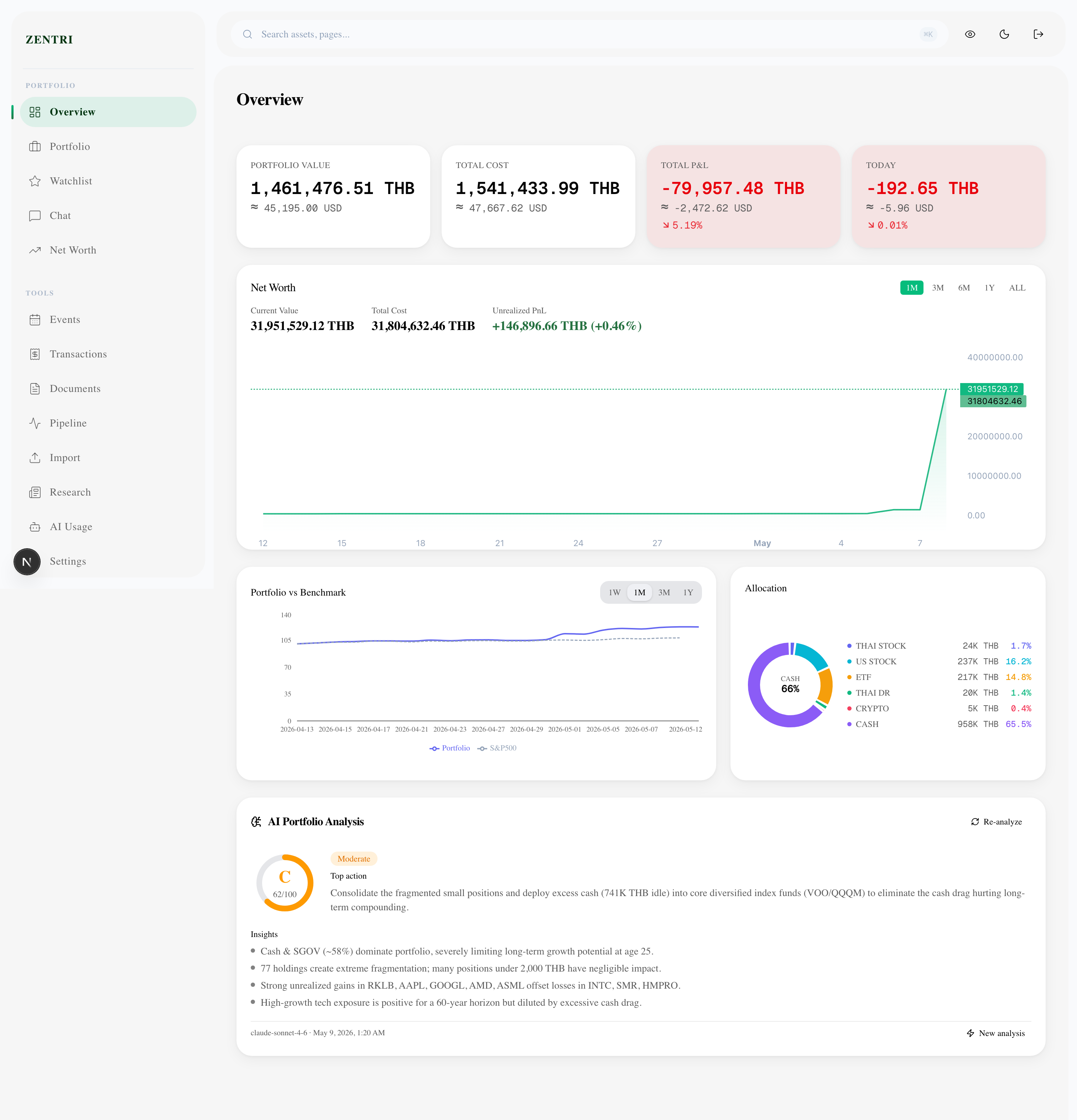

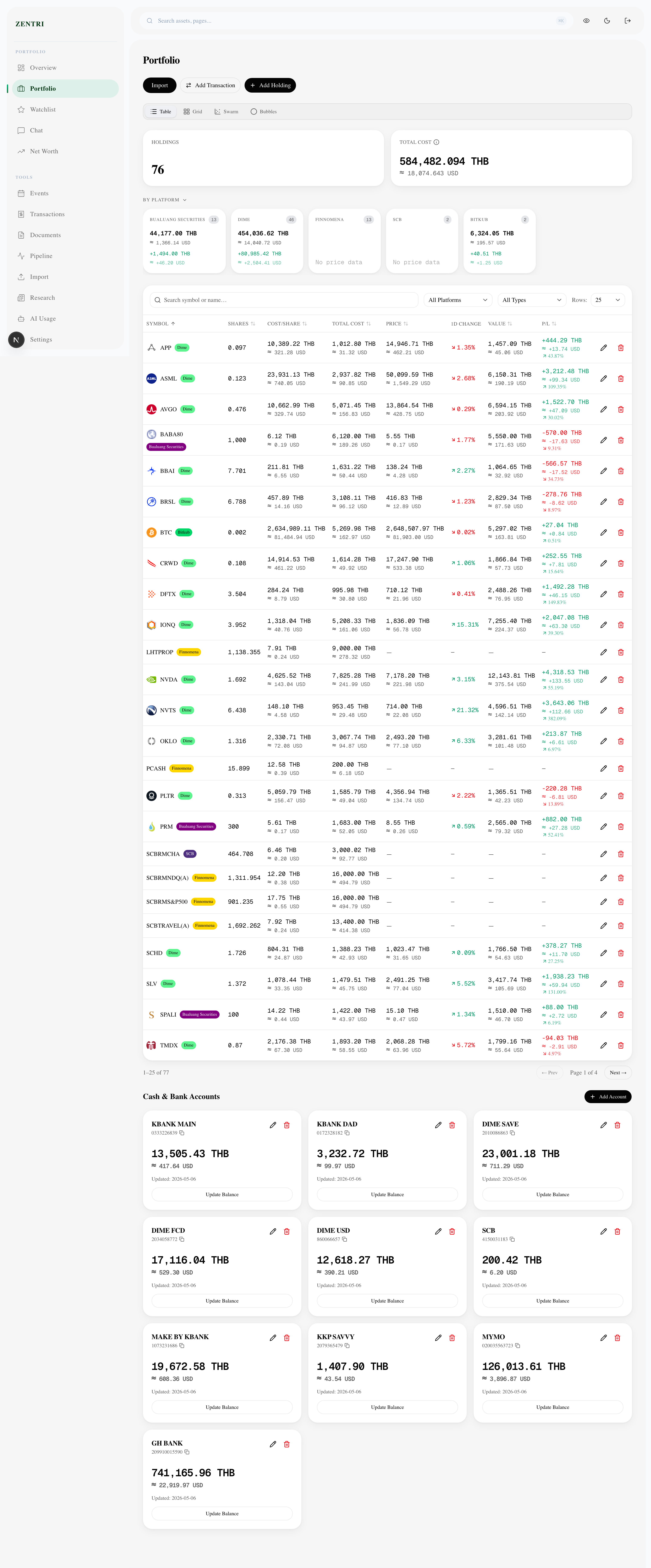

Zentri: AI-Powered Financial OS

An open-source, privacy-first financial OS that aggregates assets across Thai stocks, US equities, crypto, mutual funds, and gold, then uses LLMs to deliver institutional-grade analysis. It runs locally via Docker.

The Problem

Managing a portfolio across Thai stocks, US equities, crypto, mutual funds, and gold requires jumping between five different apps — none of which talk to each other, and none of which give you a plain-language answer to: what should I do right now?

Zentri is a self-hosted financial OS that aggregates everything in one place and answers that question using LLMs. It runs entirely on your machine via Docker — your data never leaves your system.

Features

- Multi-asset tracking — Thai stocks (SET), US equities, crypto, mutual funds, gold

- AI analysis — per-asset buy/sell/hold verdicts with reasoning via Claude, GPT-4, Gemini, or local Ollama

- Two-tier LLM routing — fast local model for quick scans, cloud model for deep analysis

- Document RAG — upload fund fact sheets and annual reports; LLM cites them in analysis

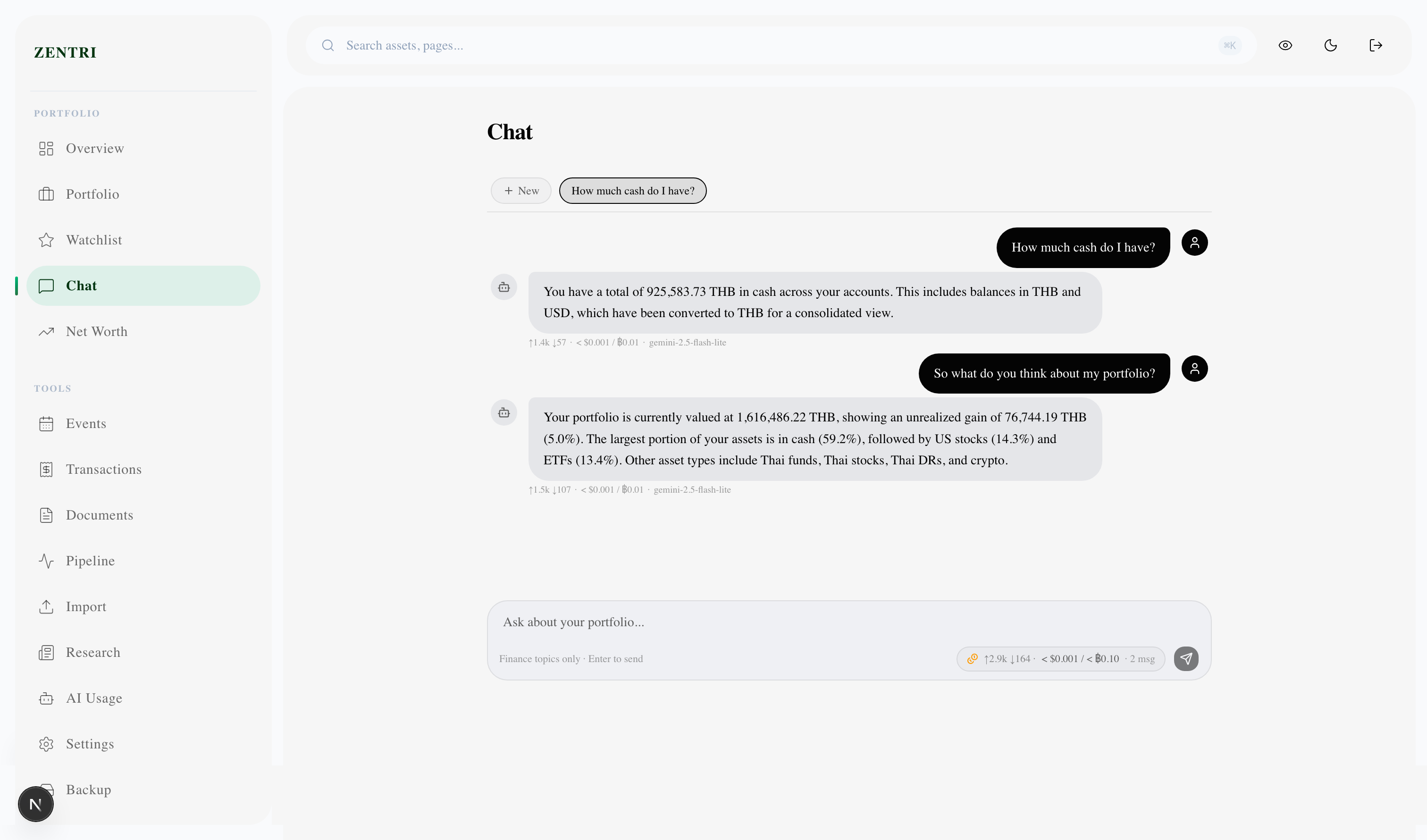

- Conversational chat — ask questions about your portfolio in natural language

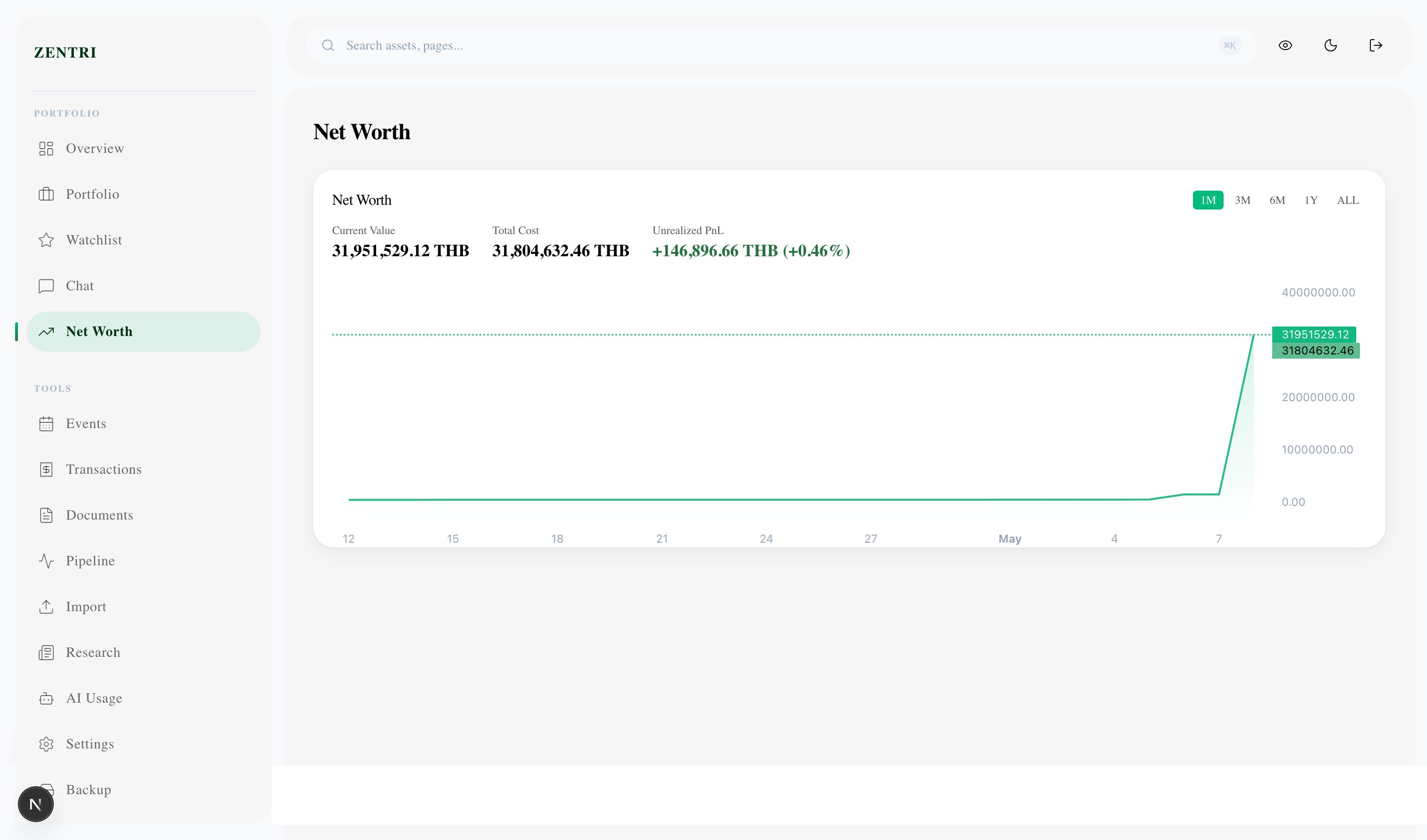

- Net worth timeline — daily snapshots of total wealth across all asset classes

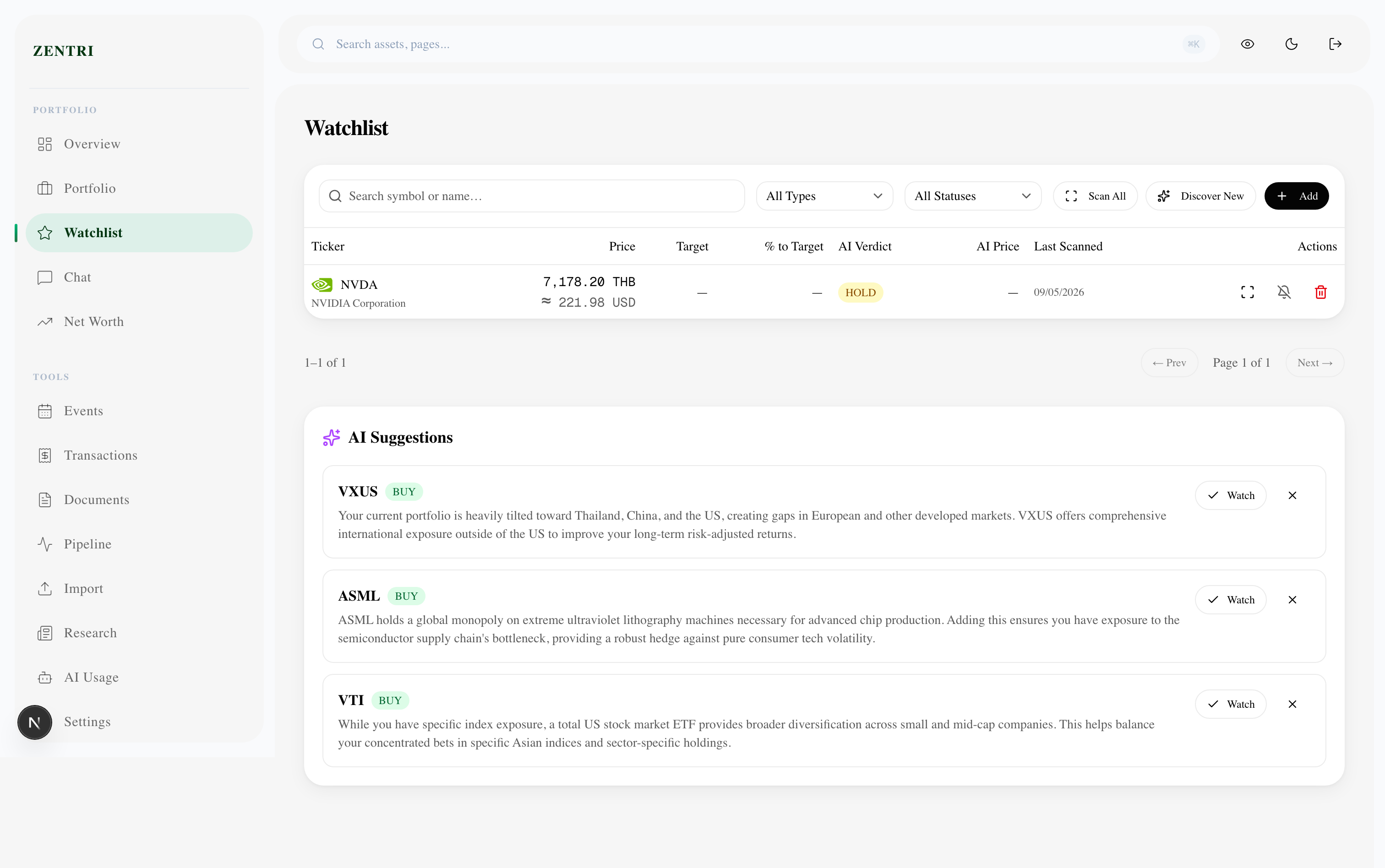

- Watchlist with AI thesis — track assets you don't own yet with AI-generated entry theses

- IPO calendar — upcoming IPOs with AI analysis

- Dividend calendar — track upcoming and received dividends

- CSV import — auto-maps broker CSV formats using LLM column detection
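The two-tier LLM routing idea from the list above can be sketched as a small dispatch function. This is a minimal illustration, not Zentri's actual code: the task labels (`quick_scan`, `deep_analysis`), the `Tier` enum, and the `route` helper are hypothetical names, and the backends are plain callables standing in for a local Ollama model and a cloud model.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Tier(Enum):
    LOCAL = "local"  # fast, cheap model (for example one served by Ollama)
    CLOUD = "cloud"  # slower, higher-quality hosted model


@dataclass(frozen=True)
class AnalysisRequest:
    symbol: str
    task: str  # hypothetical labels: "quick_scan" or "deep_analysis"


# Assumption: only quick scans are cheap enough to stay on the local tier.
LOCAL_TASKS = {"quick_scan"}


def choose_tier(request: AnalysisRequest) -> Tier:
    """Pick the local tier for quick scans and the cloud tier for everything else."""
    return Tier.LOCAL if request.task in LOCAL_TASKS else Tier.CLOUD


def route(request: AnalysisRequest, backends: dict[Tier, Callable[[str], str]]) -> str:
    """Build a prompt and send it to the backend registered for the chosen tier."""
    prompt = f"{request.task} for {request.symbol}"
    return backends[choose_tier(request)](prompt)


if __name__ == "__main__":
    # Stub backends; real ones would call the local and cloud model clients.
    backends = {
        Tier.LOCAL: lambda prompt: f"[local] {prompt}",
        Tier.CLOUD: lambda prompt: f"[cloud] {prompt}",
    }
    print(route(AnalysisRequest("AAPL", "quick_scan"), backends))
    print(route(AnalysisRequest("AAPL", "deep_analysis"), backends))
```

Keeping the tier decision in one pure function makes the cost versus quality rule easy to test and to change without touching the model clients.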

System Architecture

Key Technical Decisions

| Decision | Chosen | Rejected | Why |

|---|---|---|---|

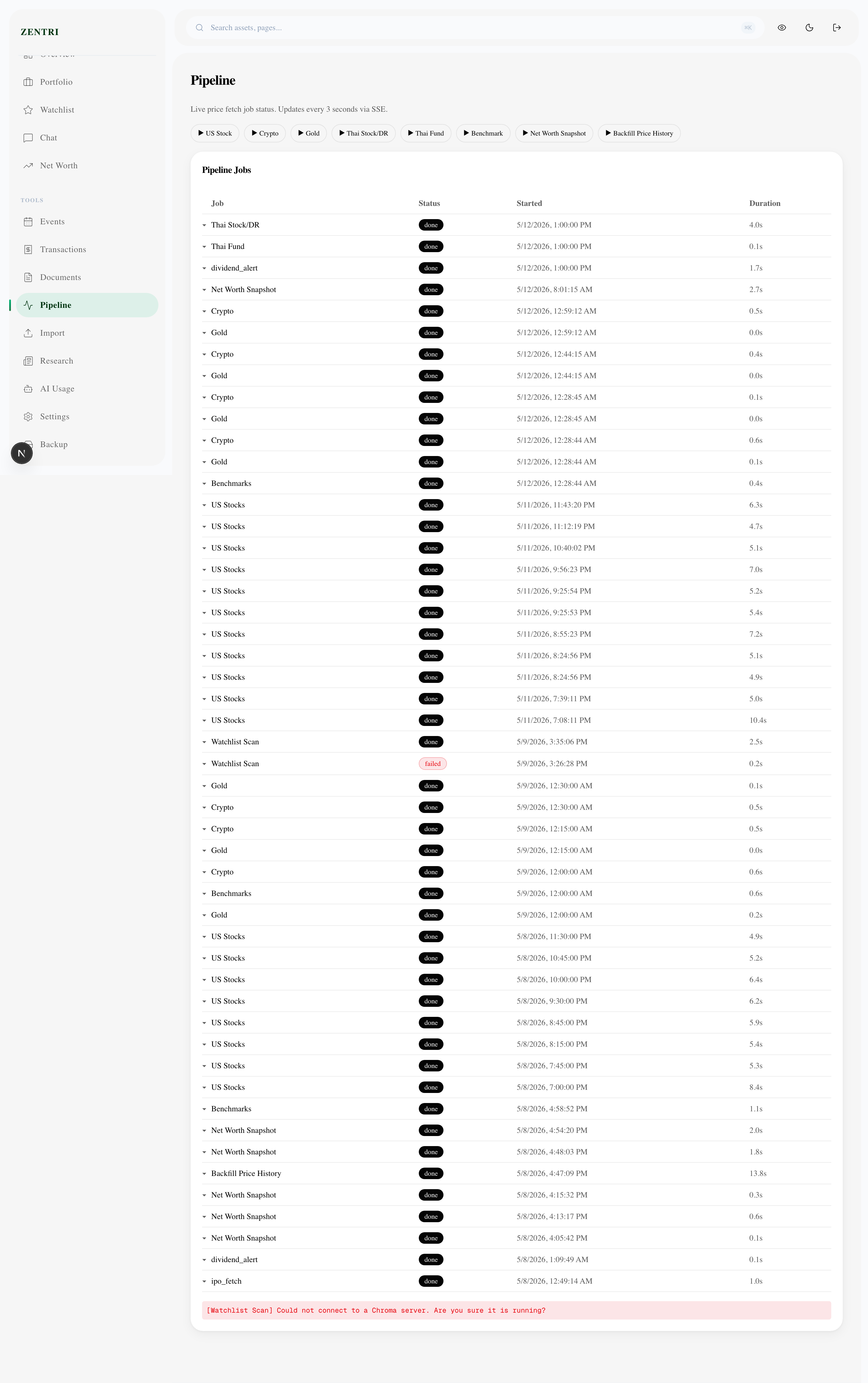

| Job queue | Redis + ARQ | Celery | Simpler, no separate broker |

| Vector store | ChromaDB | Pinecone | Local-first, zero cost |

| LLM routing | Two-tier | Single model | Cost vs quality trade-off |

| Time-series | TimescaleDB | InfluxDB | Stays in PostgreSQL ecosystem |

| Auth | JWT + bcrypt | OAuth | Self-hosted, single-user |

Screenshots

What I'd Do Differently

Start with the data pipeline before the UI. I spent two weeks building a beautiful dashboard before realising the data ingestion was unreliable — polish means nothing without trustworthy data underneath.

I'd also add end-to-end integration tests from day one. The LLM pipeline has several moving parts (queue → worker → LLM → DB), and unit tests don't catch the integration failures.
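As a rough idea of what such a test could look like, here is a minimal sketch that pushes work through the whole queue → worker → LLM → DB chain with stand-ins. Everything in it is an assumption for illustration: `asyncio.Queue` replaces Redis + ARQ, an in-memory SQLite database replaces PostgreSQL, `fake_llm` replaces the real model call, and the `verdicts` table is a hypothetical schema.

```python
import asyncio
import sqlite3


async def fake_llm(prompt: str) -> str:
    """Stand-in for the real LLM call; always returns a canned verdict."""
    return "HOLD"


async def worker(queue: asyncio.Queue, llm, db: sqlite3.Connection) -> None:
    """Consume symbols from the queue, ask the LLM, and persist the verdict."""
    while True:
        symbol = await queue.get()
        try:
            verdict = await llm(f"Give a buy/sell/hold verdict for {symbol}")
            db.execute(
                "INSERT INTO verdicts (symbol, verdict) VALUES (?, ?)",
                (symbol, verdict),
            )
            db.commit()
        finally:
            queue.task_done()


async def run_pipeline(symbols: list[str], llm=fake_llm) -> list[tuple[str, str]]:
    """Push symbols through queue -> worker -> LLM -> DB and return the stored rows."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE verdicts (symbol TEXT, verdict TEXT)")
    queue: asyncio.Queue = asyncio.Queue()
    task = asyncio.create_task(worker(queue, llm, db))
    for symbol in symbols:
        queue.put_nowait(symbol)
    # Fail the test instead of hanging if the worker stops consuming.
    await asyncio.wait_for(queue.join(), timeout=5)
    task.cancel()  # the worker loops forever, so stop it explicitly
    rows = db.execute(
        "SELECT symbol, verdict FROM verdicts ORDER BY symbol"
    ).fetchall()
    db.close()
    return rows


def test_pipeline_end_to_end() -> None:
    rows = asyncio.run(run_pipeline(["AAPL", "PTT"]))
    assert rows == [("AAPL", "HOLD"), ("PTT", "HOLD")]


if __name__ == "__main__":
    test_pipeline_end_to_end()
```

The value of a test like this is that it fails when the hand-offs between stages break, even if each stage passes its own unit tests. Swapping the stand-ins for real Redis and PostgreSQL containers would turn the same shape into a true integration test.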